A history of… measuring time (Part 3)

We have 60 minutes in an hour because of ancient Babylonian maths...

In my last piece I left European time-keeping with clock-faces that dolorously (and often inaccurately) conveyed the time to any whose eyes happened upon them through the medium of a single hour-hand effortfully carving its way through a twelve-hour period. I promised you minutes. I have promised you minutes for a while now. So in this post I will give you minutes and, if you can bear it, even seconds…

In my first piece on time I explained how the duodecimal (base 12) system was probably an obvious choice for ancient peoples, rather than the base ten that we might otherwise think is instinctual. The three joints on each of our four fingers allowed for easy tallying up to the number 12, through the tapping of the thumb on the appropriate juncture. This twelve, when splitting both day and night, then logically led to the system of 24 hours with which we are all too familiar. That makes sense, or at least it makes a kind of sense – we can see how it came about. But having done so, what would then have motivated people to break those hours into a collection of sixty minutes? And then further divide each of those minutes into sixty seconds? Put simply, where the hell did the number sixty come into all of this?

To answer this question we have to go back a long way. A very long way, more than 5,000 years. The sexagesimal (base 60) system was first used in ancient Mesopotamia in accounting and calculation before, it seems likely, even the first cuneiform writing systems were developed. Quite why the number 60 was chosen is unclear, but it seems as though there are a couple of factors that come into play. The number 60 can be divided by 2, 3, 4, 5, 6, 10, 12, 15, 20, and 30 – in other words it splits easily into useful whole-number parts. Another possible reason is that prior to its adoption both base 10 and base 12 were in use. Rather than selecting one over the other, base 60 offered something of a compromise, being the lowest number that has both 10 and 12 as factors.
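For anyone who likes to check such claims, here is a minimal Python sketch of the arithmetic. The helper name `divisors` is my own, and `math.lcm` assumes Python 3.9 or later. It illustrates why 60 is convenient; it says nothing about how the Mesopotamians actually reasoned.

```python
from math import lcm


def divisors(n: int) -> list[int]:
    """Return every positive whole number that divides n exactly."""
    return [d for d in range(1, n + 1) if n % d == 0]


# Divisors of 60, leaving out the trivial ones (1 and 60 itself)
factors_of_60 = [d for d in divisors(60) if d not in (1, 60)]
print(factors_of_60)

# How many ways each candidate base can be split into equal whole parts
for base in (10, 12, 60):
    print(base, "has", len(divisors(base)), "divisors")

# The smallest number that both 10 and 12 divide into
print("lowest common multiple of 10 and 12:", lcm(10, 12))
```

Base 10 has only four divisors and base 12 has six, whereas 60 has twelve, which is the practical advantage described above.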

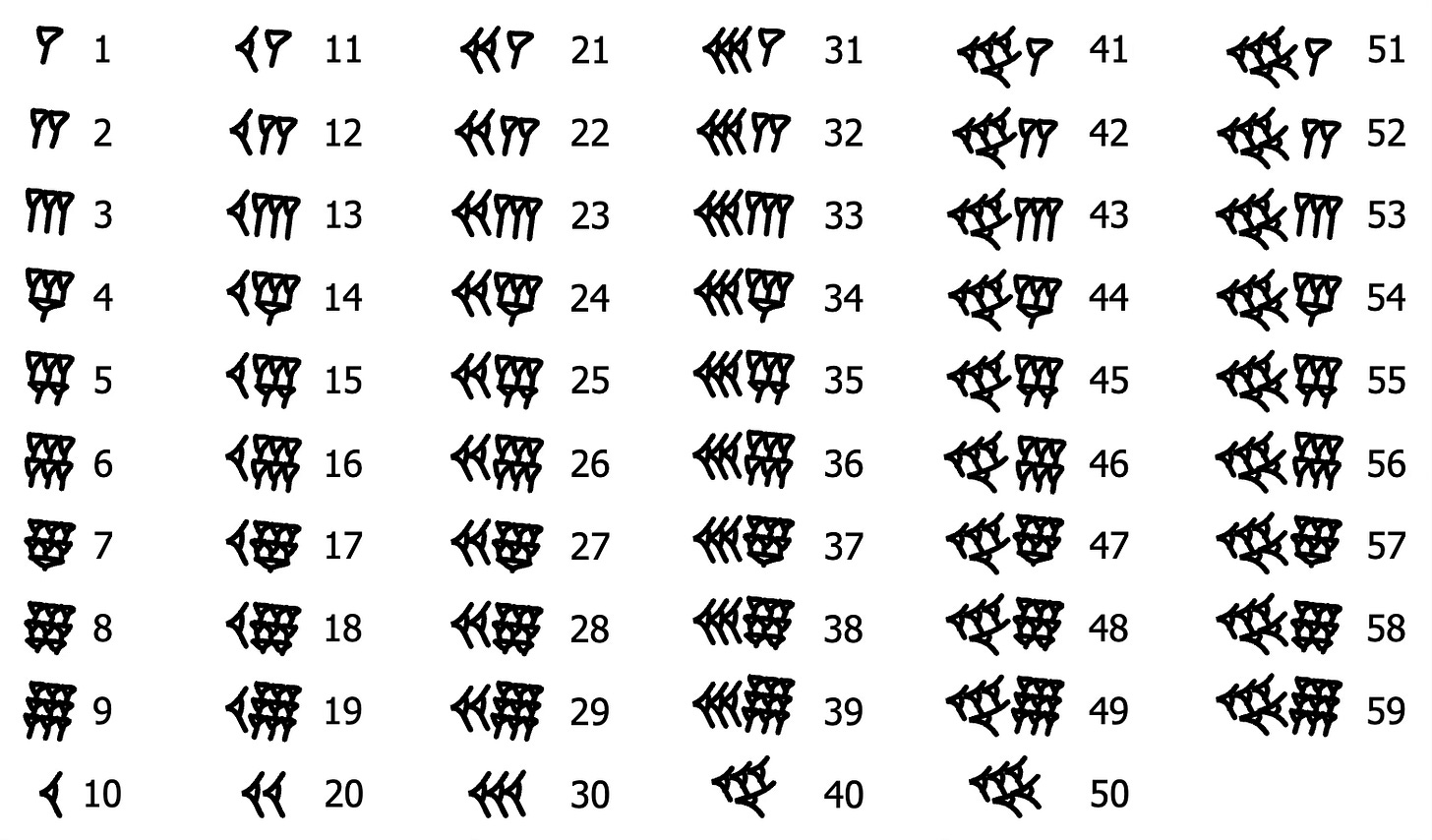

The system employed wasn’t a pure base 60 system (there were not 60 distinct symbols for the numbers) but to all intents and purposes it was used as one. The numbers were made up of a collection of different cuneiform marks:

Now it may seem to you that this was a cumbersome number system to use, but the Babylonians were incredibly adept at employing it. A clay tablet (with the catchy name YBC-7289) dating back an incredible 3,600-3,800 years shows it being deployed to calculate the square root of 2, accurately, to six decimal places. This has been described as “the greatest known computational accuracy… in the ancient world.” Furthermore the tablet is fairly small and round, and it is thought that it is something a student would have held in their hand – basically the equivalent of a notebook to be used in a lecture. Now without wishing to sound arrogant, I am pretty good at maths (“math” for our American readers) and I could work the same thing out with a pencil and paper, but I wouldn’t find it particularly easy and it would take me some time.
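To give a feel for that accuracy, here is a small Python sketch. It assumes the commonly published reading of the tablet's value, 1;24,51,10 in sexagesimal (that reading is not quoted in this piece, so treat it as my assumption), and the function name `sexagesimal_to_float` is hypothetical.

```python
from math import sqrt


def sexagesimal_to_float(whole: int, places: list[int]) -> float:
    """Convert a base-60 number (whole part plus fractional places) to a float."""
    value = float(whole)
    for position, digit in enumerate(places, start=1):
        # First place is sixtieths, second is 3,600ths, third is 216,000ths
        value += digit / 60 ** position
    return value


# Assumed reading of the tablet: 1;24,51,10
tablet_value = sexagesimal_to_float(1, [24, 51, 10])
error = abs(tablet_value - sqrt(2))

print(f"tablet value : {tablet_value:.8f}")
print(f"modern value : {sqrt(2):.8f}")
print(f"difference   : {error:.2e}")
```

On that reading the tablet's figure differs from the modern value by less than one part in a million, using only three sexagesimal places.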

How though did we get from gifted mathematicians using clay tablets to having 60 minutes in an hour today? A key link in this chain is the Greco-Roman mathematician and astronomer Claudius Ptolemy (c. 100 – 160s/170s). He used the ancient Babylonian system of base 60 (likely because of its easy divisibility)[1] to subdivide degrees into 60 parts, and thence 60 smaller parts. He describes this in his work Almagest, which was translated into Arabic in the 9th century (for use by Arabic astronomers) before being translated into Latin in the 12th century. We know that a Latin translation by Gerard of Cremona existed by about 1175, and in it we have (amongst others) this line:

…ergo, quia iam ostensum est quod chorda AB est cifre et 47 minuta et 8 secunda secundum quantitatem qua diameter est 120, erit chorda AG minus parte una et duobus minutis et 50 secundis secundum quantitatem illam, tertiis pretermissis…

…therefore, since it has already been shown that chord AB is zero and 47 minutes and 8 seconds, according to the scale in which the diameter is 120, chord AG will be less by one part and 2 minutes and 50 seconds on that same scale, the thirds being omitted…

The terms “minutes” and “seconds” come from the Latin “partes minutae primae” and “partes minutae secundae”, meaning “first diminished parts” and “second diminished parts”. Each of those original phrases got shortened in a different way over time: the first to “minutae”, giving us “minutes”, and the second to, you guessed it, “secundae”, giving us “seconds”. Okay, so Ptolemy was using minutes and seconds, but that was to divide angles and suchlike, not time – who first decided to split up hours in the same manner?

The earliest known person doing so was the Khwarazmian Iranic scholar and polymath Abu Rayhan Muhammad ibn Ahmad al-Biruni (c. 973 – c. 1050). We find evidence of this in The Chronology of Ancient Nations (al-Āthār al-bāqiya), written around the year 1000:

He takes that portion of hours, minutes, seconds, etc. which corresponds in the tables to each of these numbers of great and small cycles

It would, however, be another 400 or so years before such divisions could be practically used in timekeeping because, as we learned in the last piece, early mechanical clocks were simply not that accurate. Not only were they not very good at keeping time, they were also big – they consisted of a drum from which a weight on a rope would hang, that would slowly turn as the weight descended. The game-changer in both clock size and accuracy came with the invention of the spring clock.

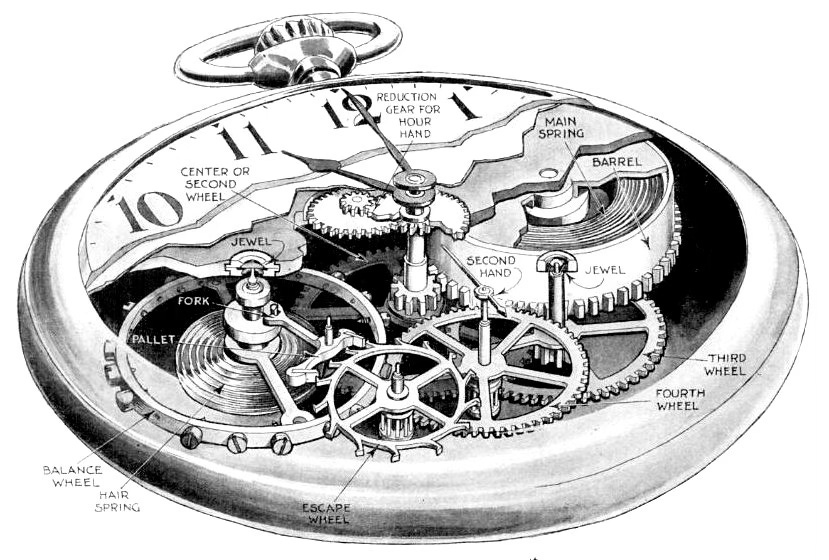

We don’t know exactly when this development took place, nor who was responsible for it, but it probably happened around the year 1400, inspired by crossbow-winding technology. The way these new clocks worked is as follows:

A long strip of steel, the mainspring, would be coiled inside a drum called the barrel

Winding the clock tightens the spring and stores energy in it

Stop winding and the spring tries to relax, releasing energy, and turning the barrel

The barrel then drives the gear train, escapement, and regulator, thus driving the clock hands.

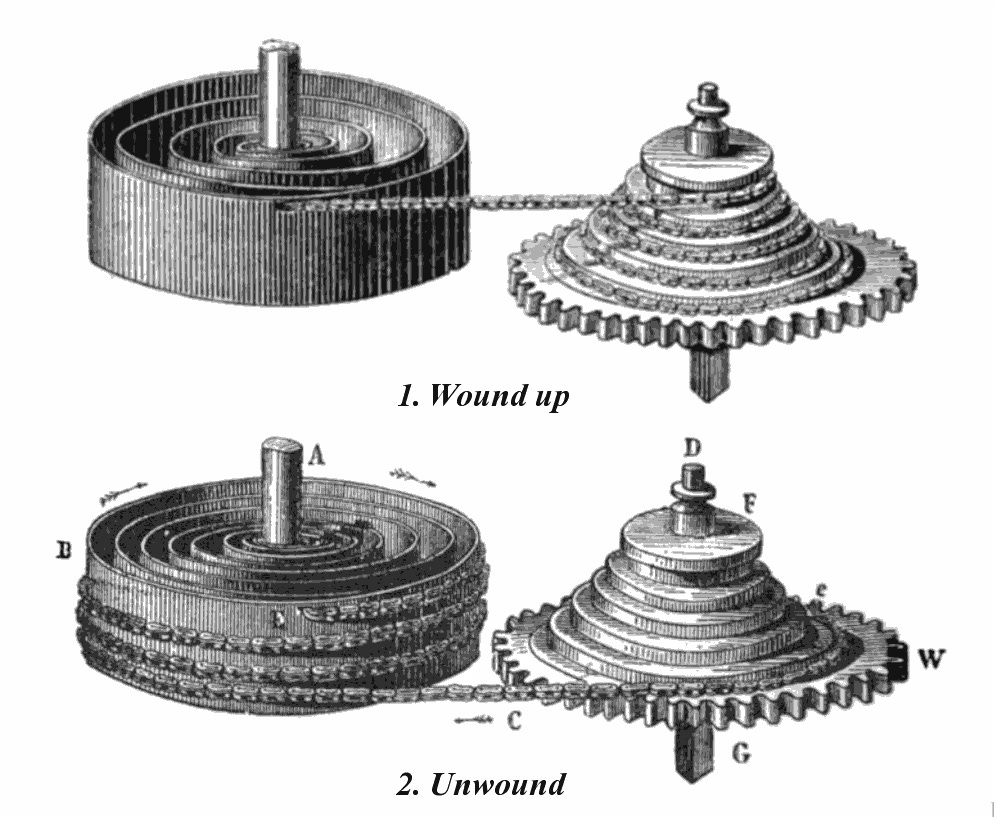

This alone is not enough though: the mainspring doesn’t pull with the same force all of the time. It is at its strongest when it is fully wound, and weakens as it runs down. The earliest spring clocks were inaccurate as a result, but this was resolved with the addition of the fusee. The fusee is a cone-shaped, grooved pulley that is connected to the barrel with a length of chain. When the clock is fully wound the chain is at the top of the cone, where the radius is smallest. As the spring gradually winds down, the chain descends the cone onto what are effectively larger and larger wheels. The lower pull of the weakened spring is offset by the increased torque multiplier of the larger wheels – think of gears on a bike. It is probably easiest to show this rather than try to explain it further:

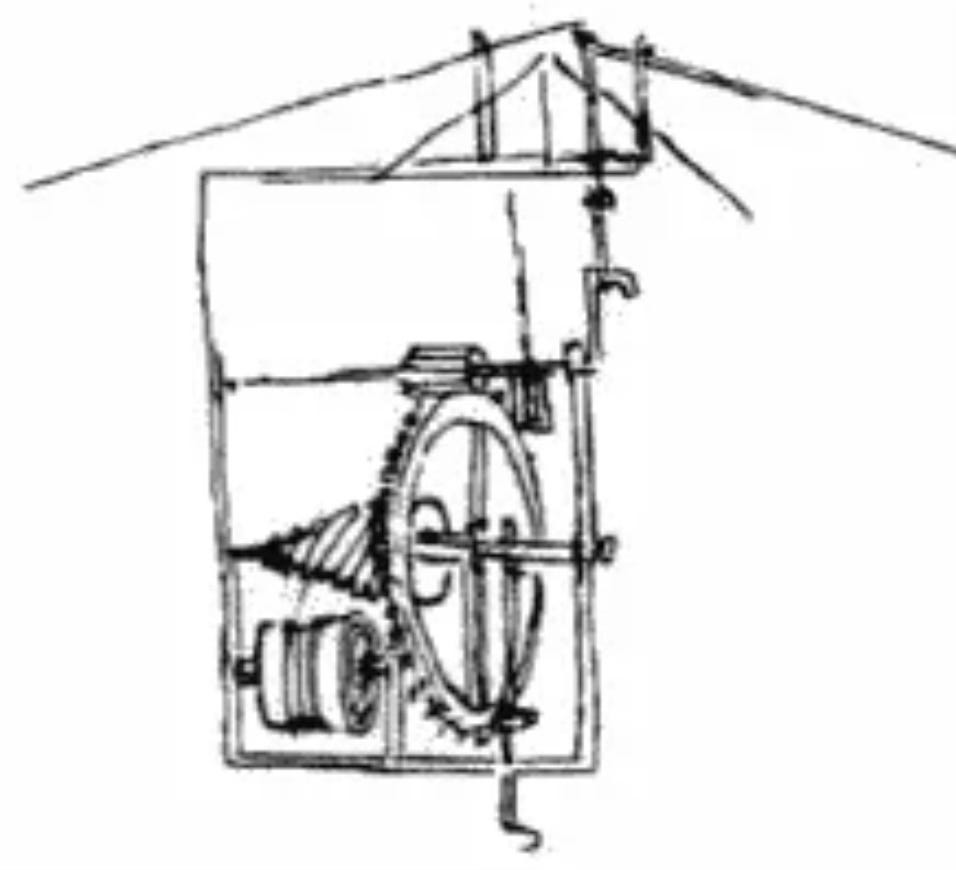
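The balancing act of the fusee can also be put into numbers. The Python sketch below is a simplified model resting on my own assumptions: the spring torque values, barrel radius and target torque are invented for illustration, friction is ignored, and `fusee_radius` is a hypothetical helper rather than anything a clockmaker would have used.

```python
def fusee_radius(spring_torque: float, barrel_radius: float, target_torque: float) -> float:
    """Radius at which the chain must sit on the fusee to give a constant output torque.

    chain tension = spring torque / barrel radius
    output torque = chain tension * fusee radius
    """
    chain_tension = spring_torque / barrel_radius
    return target_torque / chain_tension


BARREL_RADIUS = 2.0   # hypothetical value, arbitrary length units
TARGET_TORQUE = 10.0  # hypothetical value, arbitrary torque units

# Hypothetical spring torque, falling as the clock runs down
for spring_torque in (20.0, 15.0, 10.0):
    radius = fusee_radius(spring_torque, BARREL_RADIUS, TARGET_TORQUE)
    output = (spring_torque / BARREL_RADIUS) * radius
    print(f"spring torque {spring_torque:>4}: fusee radius {radius:.2f}, output torque {output:.1f}")
```

As the spring torque halves, the radius the chain acts on has to double to keep the output steady, which is why the fusee is a cone rather than a plain drum.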

And here is another fusee, in a spring-driven machine designed by Leonardo da Vinci in around 1490:[2]

The difference that this made to the size of clocks can easily be seen in what is thought to be the oldest surviving spring-clock. This glorious contraption was made for Philip the Good,[3] Duke of Burgundy, in around 1430:

The sharp-eyed amongst you will have noticed that this clock still only had an hour hand; whilst minutes were known about at this point, the clocks lacked the accuracy to measure them properly. It also didn’t really matter as such measurements had no real utility value – people were not using time measured that accurately to order their lives. The earliest reference we have to a clock with minutes shown on a dial is from an illustration of one in a manuscript by Paulus Almanus in 1475, but this still lacked the all-important hand. The generally accepted first clock with a minute hand is one made in 1577 by Jost Bürgi to allow for precise astronomical observations. Such devices were rarities however; well into the 17th century town clocks in England would only have an hour hand.

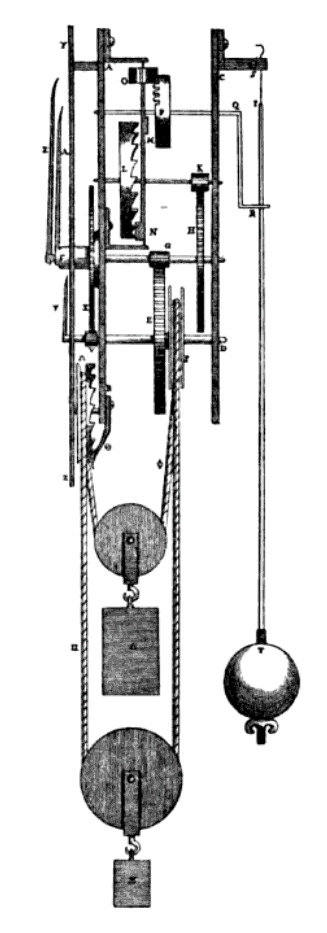

Weirdly the second hand may have come first, as one can be found on a clock dating from 1560, but rather than being a meaningful indicator of time, it would simply spin around the dial as a ready way of seeing if the clock was actually working or not. Again, even with these innovations the clocks were not accurate enough to meaningfully display such small divisions of time. Really accurate time measurement came with the invention of the pendulum clock by Christiaan Huygens (1629 – 1695) on Christmas Day 1656.[4] Galileo had come up with the idea of using a swinging bob to measure time some years earlier, but it was Huygens who came up with the mathematical formula that related pendulum length to the duration of the swing. Specifically he calculated that for a one-second swing the pendulum had to be 39.1 inches (approximately 99.4 cm) long.
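That length can be roughly reproduced with the modern textbook form of the relationship, T = 2π√(L/g), which holds for small swings. The Python sketch below assumes standard gravity of 9.80665 m/s² (the local value varies slightly from place to place) and a full back-and-forth period of two seconds, so that each single swing takes one second. The function name `pendulum_length` is my own.

```python
from math import pi

STANDARD_GRAVITY = 9.80665  # m/s^2, assumed; local gravity differs slightly


def pendulum_length(period_s: float, g: float = STANDARD_GRAVITY) -> float:
    """Length in metres of a simple pendulum with the given full period (small swings)."""
    # Rearranged from T = 2 * pi * sqrt(L / g)
    return g * (period_s / (2 * pi)) ** 2


# One second per swing means two seconds for a full back-and-forth period
length_m = pendulum_length(2.0)
length_in = length_m / 0.0254  # one inch is exactly 0.0254 m

print(f"{length_m * 100:.1f} cm, or {length_in:.1f} inches")
```

The result lands within a millimetre or so of the figure quoted above, with the small difference coming down to the value of gravity assumed.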

In the centuries that followed numerous inventions drove both the accuracy and miniaturisation of clocks, with both things famously combining to allow for the correct calculation of longitude. There was however still something about the way people measured time that was very different from today. Okay, perhaps “measured” is the wrong term; “set” would be better. Until very recently in historical terms, time would vary from one town to the next. The reason for this was simple – each would set their clocks to the solar noon of their location. People wanted to know what the accurate time was for where they lived; they operated by local time. Time shifts by around four minutes per degree of longitude, so in my home town of Oxford, roughly 1.25° west of London, the solar time is around five minutes behind that of our capital city. To this day the bell “Great Tom” in Christ Church college (which you may remember was cast from the bronze of the Osney Abbey bell) chimes each evening at 9:05, respecting the original local time.
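The four-minutes-per-degree figure falls straight out of the Earth turning through 360° in 24 hours. A minimal Python sketch, in which the function name and the sign convention (negative for west of the reference point) are my own assumptions:

```python
# The Earth turns through 360 degrees in 24 hours of 60 minutes each
MINUTES_PER_DEGREE = 24 * 60 / 360


def solar_offset_minutes(degrees_east: float) -> float:
    """Minutes by which local solar time runs ahead of a reference place.

    degrees_east is the longitude difference from the reference;
    use a negative number for places to the west.
    """
    return degrees_east * MINUTES_PER_DEGREE


# Oxford, taken as roughly 1.25 degrees west of London
oxford_offset = solar_offset_minutes(-1.25)
print(f"{MINUTES_PER_DEGREE} minutes per degree")
print(f"Oxford offset: {oxford_offset} minutes")
```

A negative result means the local clock runs behind the reference, which is why Great Tom sounds at 9:05 rather than 9:00.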

The fact that different towns, even ones fairly short distances apart, had different times didn’t really matter until the middle of the 19th century. If you were to meet someone at noon, then obviously it would be noon where they lived, and the fact that this might have been a few minutes earlier or later than the place you had come from was a trivial concern. What changed things was the introduction of both the telegraph and particularly the railways. If you were writing the timetable, would you give a train’s arrival in the time of the place it had come from or the place that it had arrived at? Choose the first and people would be confused by the arrival time being out of kilter with the local clocks; choose the second and the duration of the train journey would be wrong. In Britain in the 1840s the railway companies began using Greenwich Mean Time (GMT) as the standard across their networks (my local train company, Great Western Railway, did so in November 1840, for example).

This standardisation of time was very much a thing driven by private enterprise, the railway networks and telegraph companies, rather than the government. Just because they were all using GMT didn’t mean that the locals were. This meant that there were decades where one had to think for a moment when deciding when to leave the house to catch a train. The time on the timetable would of course be GMT, but the clock on your mantelpiece could be showing local time. Gradually convenience led to increasing adoption of GMT across the board, but it wasn’t until the Statutes (Definition of Time) Act 1880, which required that expressions of time in Acts, deeds, and legal instruments were to be read as Greenwich Mean Time across all of Great Britain, that standard time was required by law.

The next challenge was international in nature. Standardising time within one country was hard enough, but global navigation, telegraphy, and commerce required agreement on a prime meridian and on a shared system for counting time. This culminated in the International Meridian Conference held in Washington in October 1884 where delegates were gathered specifically to consider a common prime meridian and a universal day. The conference adopted the meridian of Greenwich as the prime meridian for longitude and recommended a universal day beginning at midnight at Greenwich. Why Greenwich? Well in part it was due to Britain’s global power at the time, but also because of its maritime and scientific clout. The majority of the world’s shipping already reckoned longitude from Greenwich so this approach was pretty much the de facto standard before it was formally agreed.

The final piece of standardisation concerned the units of time themselves. For a long time, the second was effectively tied to the Earth’s rotation via the mean solar day: 1 second was treated as 1/86,400 of a day. But astronomers and physicists discovered that the Earth’s rotation is not perfectly uniform – basically not all days are quite the same length – which made this definition unsatisfactory. In 1960 the second was redefined astronomically using the tropical year 1900, but this was still not accurate enough for the modern age. In 1967, the General Conference on Weights and Measures redefined the second in atomic terms, as 9,192,631,770 cycles of the radiation corresponding to the hyperfine transition of caesium-133.
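The 86,400 figure is simply the Babylonian sixties stacked on top of the 24-hour day, as this short Python sketch shows. The constant names are my own; the caesium figure is the one quoted above.

```python
HOURS_PER_DAY = 24
MINUTES_PER_HOUR = 60
SECONDS_PER_MINUTE = 60

# The old definition: a second as one equal slice of the mean solar day
seconds_per_day = HOURS_PER_DAY * MINUTES_PER_HOUR * SECONDS_PER_MINUTE

# The 1967 definition: a second as a fixed count of caesium-133 cycles
CAESIUM_CYCLES_PER_SECOND = 9_192_631_770
caesium_cycles_per_day = seconds_per_day * CAESIUM_CYCLES_PER_SECOND

print(f"{seconds_per_day:,} seconds in a day")
print(f"{caesium_cycles_per_day:,} caesium cycles in a day of exactly that length")
```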

There was still a final problem to solve. Civil time still needed to stay reasonably aligned with day and night, which depend on the Earth’s actual rotation. But atomic time runs uniformly, whereas Earth rotation drifts slightly. The compromise was Coordinated Universal Time (UTC). UTC runs at the same rate as International Atomic Time but differs from it by an integral number of seconds. What that practically means is the insertion of leap seconds, the first of which was inserted on 30 June 1972 to keep UTC within 0.9 seconds of UT1, a form of time based on the Earth’s rotation.

When I started researching this series, I didn’t think that I would cover history all the way from clay tablets to atomic clocks. But I absolutely love the fact that some of the most precise devices in existence today are measuring something whose definition can be traced back to 5,000-year-old cuneiform characters!

[1] He wasn’t unique: Hipparchus and other Greek astronomers also used Babylonian sexagesimal methods.

[2] No, I have no idea what it is. Some kind of spring-powered helicopter perhaps?

[3] I fear that the term “good” is somewhat subjective here. I suspect that the French in general, and Joan of Arc in particular, would have described him differently after his soldiers captured her and ransomed her to the English. Who promptly burned her alive…

[4] Yes, he really built his first test model on Christmas Day. No, he didn’t have children.

Another factor in standardizing time was the conjunction of telegraphs and meteorology.

If you wanted to correlate the weather reports and trace a storm, you needed to know the reports' relative time.
